We have no doubt that, as experienced teachers, you have not only seen many multiple choice test questions but have also created them yourselves.

Keeping with the kitchen metaphor, there is no absolutely perfect recipe, but there are methods and ingredients that can be improved, even if ever so slightly.

We found a worthy set of suggestions from the Vanderbilt University Center for Teaching, “Writing Good Multiple Choice Test Questions.” And even better, as it is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License, we can not only refer you to it, but repurpose parts of it here.

Cite this guide: Brame, C., (2013) Writing good multiple choice test questions. Retrieved July 1, 2020 from https://cft.vanderbilt.edu/guides-sub-pages/writing-good-multiple-choice-test-questions/.

While your H5P cooking will include more question types than multiple choice, keep the guide’s suggestions in mind. Also consider that the guide is written more for assessments, while our work is on practice exercises; it does not include suggestions for providing feedback (that’s another post).

We will maintain the terminology from the Vanderbilt guide for the parts of the multiple choice item:

A multiple choice item consists of a problem, known as the stem, and a list of suggested solutions, known as alternatives. The alternatives consist of one correct or best alternative, which is the answer, and incorrect or inferior alternatives, known as distractors.
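To keep the terminology straight, here is a minimal Python sketch of those parts as a data structure. The class name `MultipleChoiceItem`, its fields, and the sample question are our own illustration; they are not part of H5P or the Vanderbilt guide.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class MultipleChoiceItem:
    """Hypothetical model of one item, using the guide's terminology."""

    stem: str                      # the problem posed to the learner
    answer: str                    # the one correct or best alternative
    distractors: tuple[str, ...]   # the incorrect or inferior alternatives

    @property
    def alternatives(self) -> tuple[str, ...]:
        """All suggested solutions: the answer plus the distractors."""
        return (self.answer, *self.distractors)


# Made-up sample item, for illustration only.
item = MultipleChoiceItem(
    stem="Which of the following is a chemical element?",
    answer="Oxygen",
    distractors=("Water", "Table salt", "Carbon dioxide"),
)
print(item.alternatives)
```

Keeping the answer separate from the distractors makes it easy to review each distractor on its own for plausibility.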

Constructing an Effective Stem

A stem should be meaningful on its own and present a definite problem focused on a learning outcome. Less meaningful stems lead to students trying to infer a response from the question. Here is their example of a non-meaningful stem (cleverly baked here in the kitchen as H5P!):

Compare this to a re-written stem that is more direct and stands on its own as a question:

Avoid including extraneous information in a stem; in this example, the stem includes content better left in the context presented before the question.

Avoid negatively framed stems unless they are specifically required by an outcome. These questions are harder to understand and lead students to look for the “trick” answer. If a negatively worded stem is needed (e.g. to identify a dangerous substance or practice), use italics or capitalization to highlight the negative phrase.

Avoid this kind of stem:

Instead, try writing negatively worded stems like this:

The last suggestion for stems is that they should be complete sentences. Avoid stems that have a fill-in-the-blank in the middle (besides, there are different H5P content types for that). A partial sentence forces learners to hold it in their heads while testing out different answers, an unnecessary increase in cognitive load.

First, try this stem that has an interior blank:

Compare it to this better version:

Constructing Effective Alternatives

All alternatives (the answer choices) should be plausible responses to the stem. “The function of the incorrect alternatives is to serve as distractors, which should be selected by students who did not achieve the learning outcome but ignored by students who did achieve the learning outcome. Alternatives that are implausible don’t serve as functional distractors and thus should not be used. Common student errors provide the best source of distractors.”

This example has several implausible and easily discarded distractors.

The Vanderbilt guide also suggests avoiding overly wordy alternatives (long, multi-line sentences), as they are more an indicator of reading ability than of achieving a learning objective.

Alternatives should be mutually exclusive; overlapping responses suggest some kind of trick to fool the learner and thus become a distraction from the goal.

Try this example:

Also, alternatives should be homogenous (parallel) in content. If not, one answer can stand out as an obvious answer just from noting the pattern of wording. Try this example:

As you can see, the structure and patterns of alternatives can give clues to experienced test-takers. They know that correct answers often differ from incorrect ones in length, grammar, formatting, and language choice. To avoid giving such clues, alternatives should:

- have grammar that is consistent with the stem

- be parallel in form

- be of similar length

- use similar language (all of them like or all of them unlike the language of the textbook).
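The “similar length” item on this checklist is one that can be roughly checked by machine. The sketch below is our own illustration, not something from the Vanderbilt guide or H5P: the function name `length_outliers`, the sample alternatives, and the 1.5 threshold are all arbitrary assumptions, and it only flags alternatives that are unusually long.

```python
from statistics import median


def length_outliers(alternatives, ratio=1.5):
    """Return alternatives whose word count exceeds `ratio` times the median.

    The default ratio of 1.5 is an arbitrary assumption for illustration.
    """
    counts = [len(text.split()) for text in alternatives]
    typical = median(counts)
    return [text for text, n in zip(alternatives, counts) if n > ratio * typical]


# Made-up alternatives where one option stands out by length alone.
sample = [
    "Mitosis",
    "Meiosis",
    "Binary fission",
    "The process by which a cell divides into two identical daughter cells",
]
print(length_outliers(sample))
```

A check like this only catches length; grammar, parallel form, and language choice still need a human read.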

Also, avoid alternatives like “all of the above” and “none of the above”:

When “all of the above” is used as an answer, test-takers who can identify more than one alternative as correct can select the correct answer even if unsure about other alternative(s). When “none of the above” is used as an alternative, test-takers who can eliminate a single option can thereby eliminate a second option. In either case, students can use partial knowledge to arrive at a correct answer.

In addition, avoid alternatives that feature 3 single options A, B, and C followed by ones such as “A and B”, “B and C”, “A and C” as “a sophisticated test-taker can use partial knowledge to achieve a correct answer.”

These formats are not needed in H5P, where you can set a question to accept multiple answers as correct (checkboxes rather than radio buttons).

Alternatives should be displayed in a logical order (alphabetical or numerical) to downplay bias towards certain positions (note that in H5P alternatives can be set to be displayed in randomized order; know also when to turn that option off).
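The choice between a logical order and a randomized one can be sketched in a few lines of Python. This is only an illustration of the decision, not how H5P implements its randomization setting; the function `display_order` and its parameters are hypothetical.

```python
import random


def display_order(alternatives, randomize, key=None, rng=None):
    """Return a new list of alternatives in the order they should be shown.

    randomize=True shuffles a copy; otherwise the copy is sorted by `key`
    (plain alphabetical order when no key is given).
    """
    ordered = list(alternatives)
    if randomize:
        (rng or random).shuffle(ordered)
    else:
        ordered.sort(key=key)
    return ordered


# Numerical alternatives read best in numerical order, so randomization is off.
print(display_order(["100", "25", "50", "75"], randomize=False, key=float))
# ['25', '50', '75', '100']

# Alternatives with no inherent order can be shuffled instead.
print(display_order(["Sodium", "Argon", "Neon"], randomize=True))
```

Sorting with `key=float` matters for the numerical case: plain alphabetical sorting would put "100" before "25".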

For this problem about a chemical reaction, the answers should follow the logical order of the equation:

There is nothing magical about the four-alternative multiple choice item:

The number of alternatives can vary among items as long as all alternatives are plausible. Plausible alternatives serve as functional distractors, which are those chosen by students that have not achieved the objective but ignored by students that have achieved the objective. There is little difference in difficulty, discrimination, and test score reliability among items containing two, three, and four distractors.

For more suggestions about complexity of question items and considerations for writing items that test higher-order thinking, see the full article.

The authors also provide a condensed version of the tips as a two page handout.

See also Constructing Written Test Questions For the Basic and Clinical Sciences (National Board of Medical Examiners).

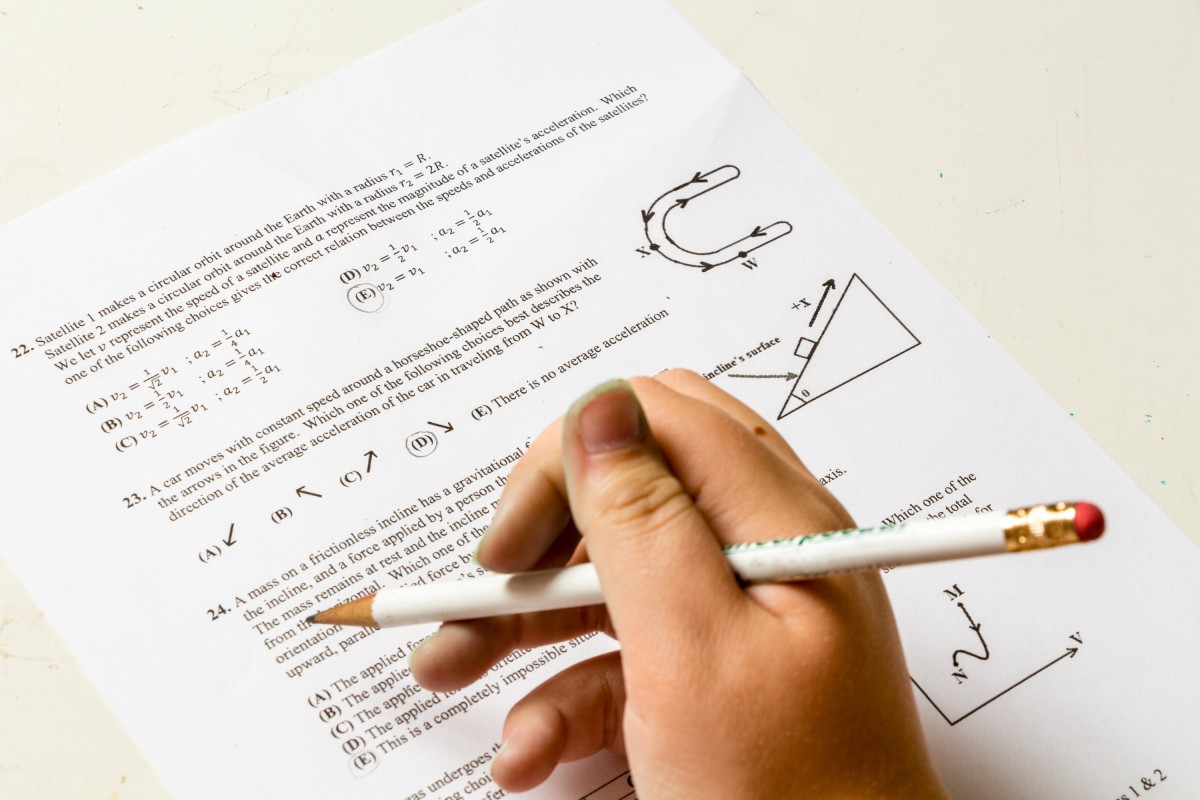

Image Credit: PxHere photo by Ridam Sharma placed into the public domain using Creative Commons CC0